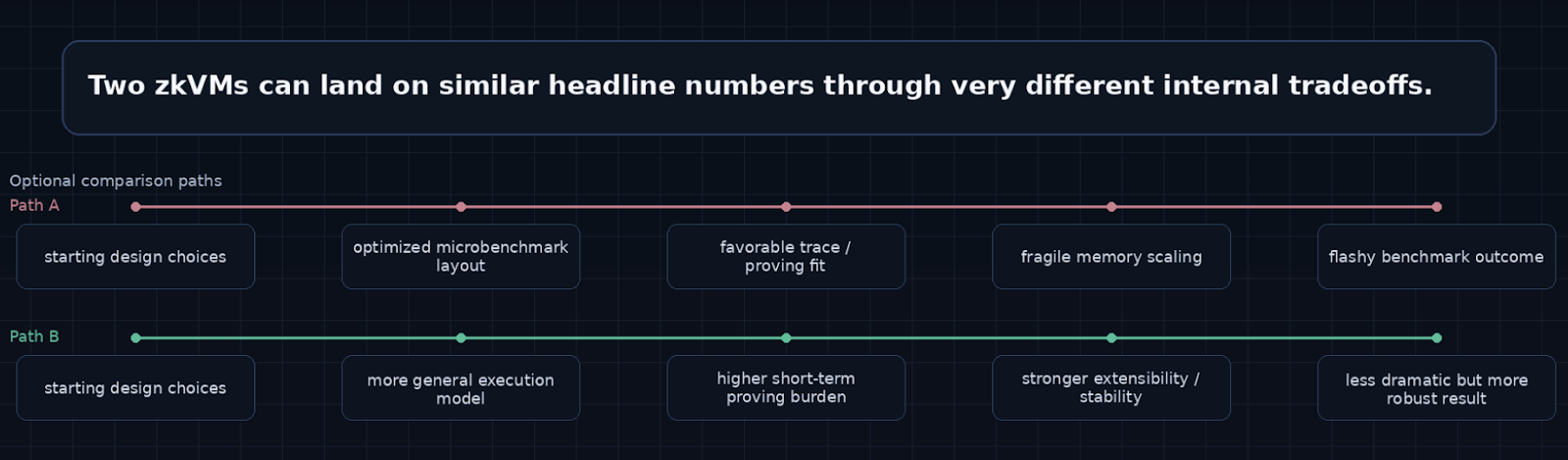

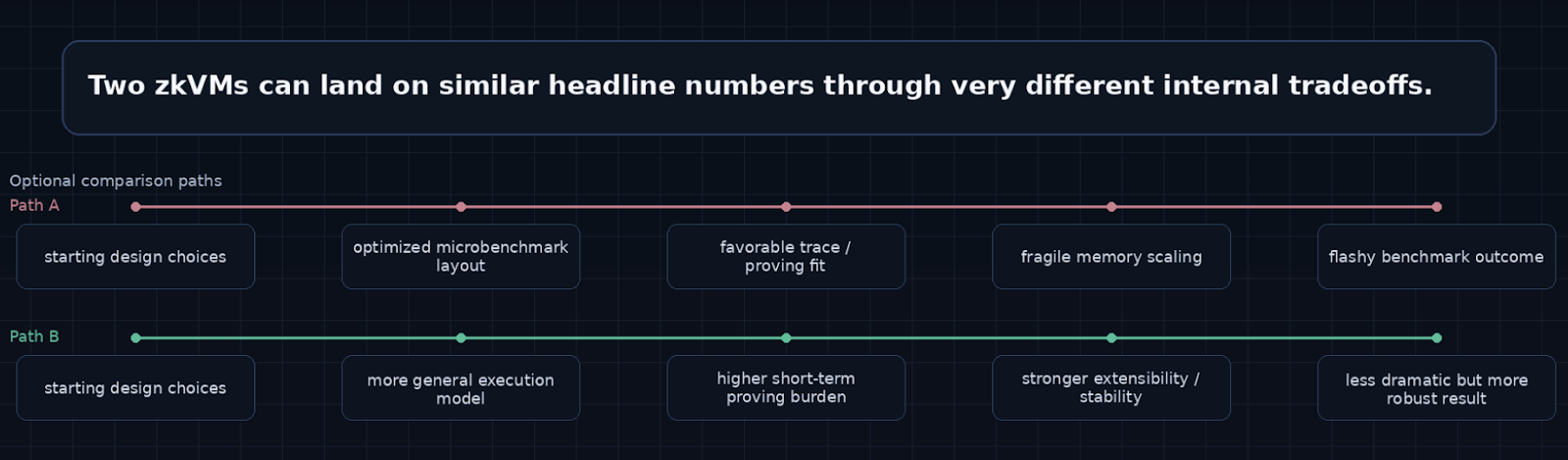

The zkVM market is often discussed as if it were a simple scoreboard: proving time, proof size, verifier cost, and perhaps recursion performance. Yet two zkVMs can produce similar headline numbers while being built on entirely different assumptions about instruction semantics, memory handling, trace structure, recursion, and long-term extensibility. Looking only at the benchmark layer flattens the real engineering differences between systems, making them appear more interchangeable than they really are.

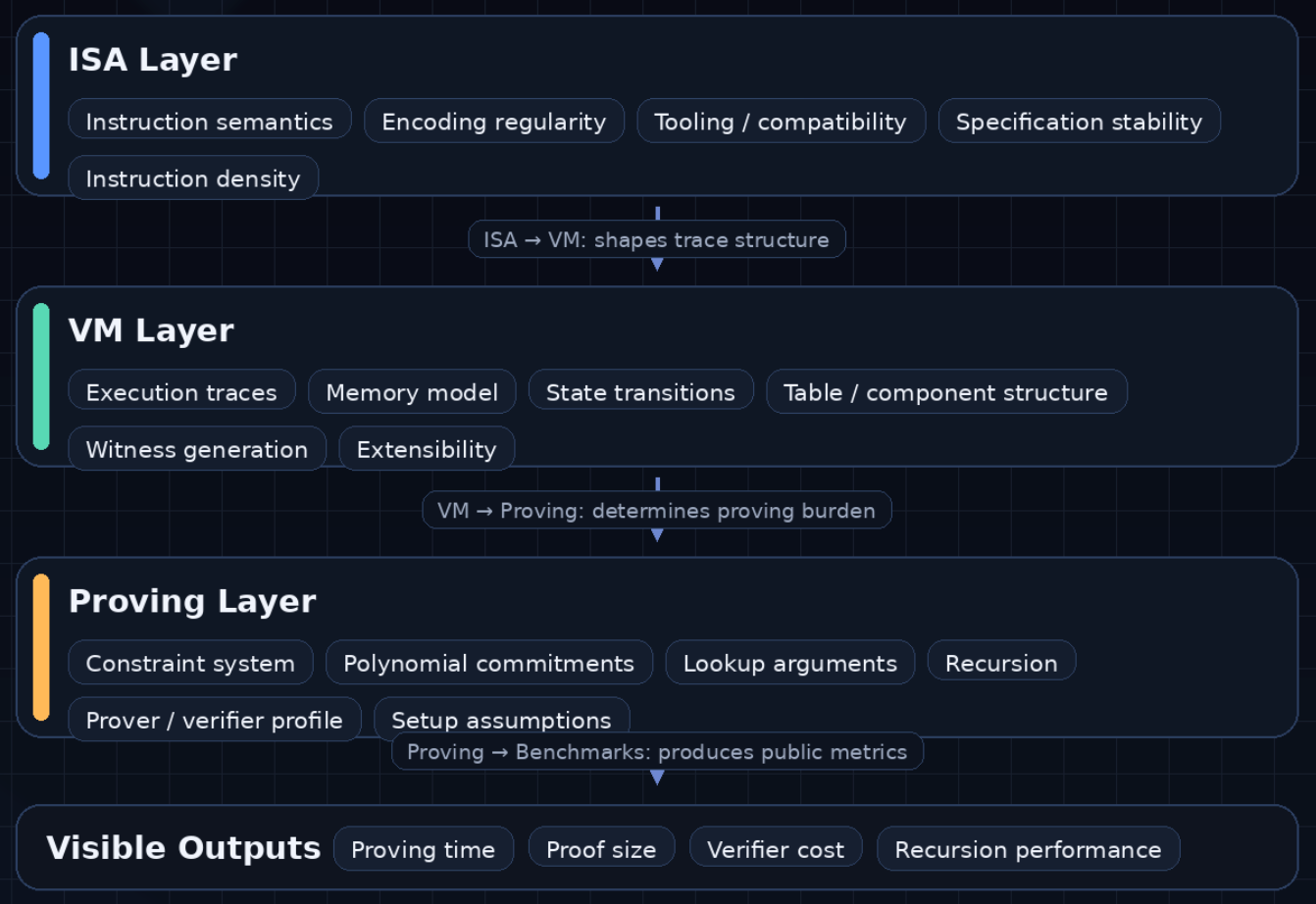

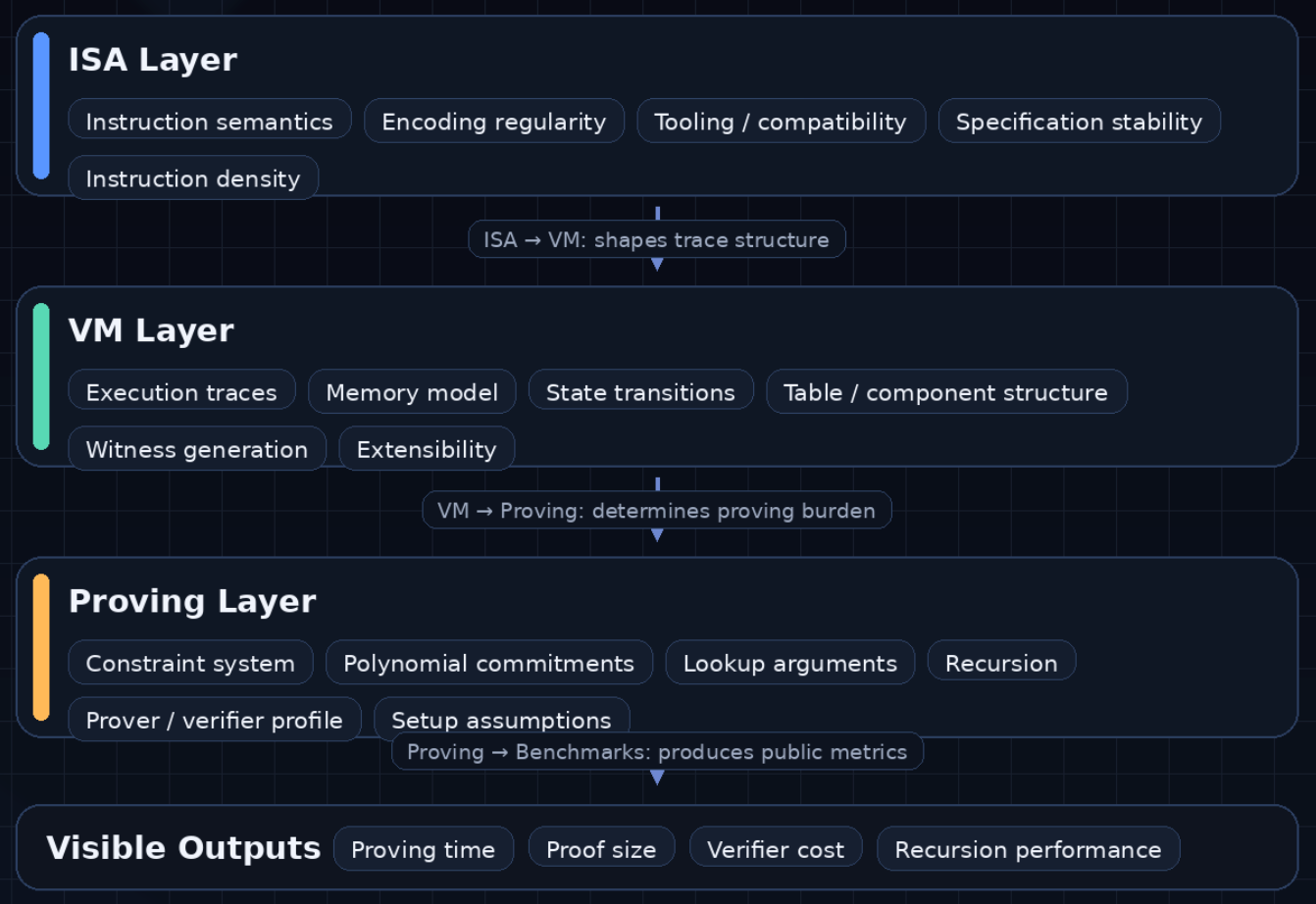

A recent ePrint paper, “SoK: Understanding zkVM: From Research to Practice”, offers a better framework for thinking about the space. Instead of treating a zkVM as a monolithic proving system, it breaks the stack into three interacting layers: the instruction set architecture (ISA), the virtual machine layer, and the proving layer. That improves the discussion immediately, because zkVMs should not be defined by a number. They are defined by how decisions at one layer propagate into every layer below it. We should ask not only whether a zkVM is fast, but what part of the stack is doing the work, where the costs are concentrated, and which tradeoffs are being made to get there.

Benchmarks are the visible consequence of many hidden choices, not necessarily the reflection of great architecture.

A strong result on a microbenchmark may reflect an efficient instruction set, a favorable memory model, a highly specialized proving backend, or simply a workload that happens to flatter one design more than another. A weaker result may conceal advantages that matter more in the long run: greater generality, cleaner semantics, a better recursion story, or a more realistic path toward supporting broader classes of programs.

This is especially true in ZK systems because costs move between layers. A cleaner ISA may simplify one part of the stack while complicating another. A VM architecture that is pleasant for modular constraint composition may impose different witness-generation costs. A proving backend that looks excellent on one trace layout may be much less attractive once memory pressure, lookup structure, or recursion requirements change. Performance claims are often real, but they are rarely complete.

The SoK’s layered framework is useful because it restores causality. It shifts the question from “Which system is faster?” to “Why is it faster? What exactly is being optimized, and what are the consequences elsewhere in the stack?”

In conventional systems, ISA choice is often discussed in terms of compatibility, tooling, or developer familiarity. In a zkVM, it has much deeper consequences.

The ISA determines how high-level programs are expressed as low-level instructions. That alone would make it important. But in a zkVM, those instruction semantics must eventually be represented in execution traces and enforced in arithmetic constraints. Encoding regularity, instruction density, field layout, and specification stability all begin to matter in ways they do not in ordinary software environments. ISA choice shapes the structure of the trace and the complexity of the proving machinery that follows.
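To make the link between instruction semantics and constraints concrete, here is a minimal sketch. It assumes a hypothetical two-instruction ISA (`ADD`, `MUL`) over an arbitrarily chosen prime field; the column names, the modulus, and the helper functions are illustrative and not taken from any real zkVM. Each executed instruction becomes one trace row, and each row must satisfy a small set of polynomial constraints.

```python
# Toy illustration only: a hypothetical two-instruction ISA over a prime field.
P = 2**31 - 1  # field modulus, chosen arbitrarily for this sketch


def execute(program):
    """Run (opcode, a, b) instructions and record one trace row per step."""
    trace = []
    for op, a, b in program:
        if op == "ADD":
            c = (a + b) % P
        elif op == "MUL":
            c = (a * b) % P
        else:
            raise ValueError(f"unknown opcode: {op}")
        # Selector columns encode the opcode as 0/1 flags.
        trace.append({"is_add": int(op == "ADD"), "is_mul": int(op == "MUL"),
                      "a": a, "b": b, "c": c})
    return trace


def row_constraints(row):
    """Evaluate the constraint polynomials on one row; each must equal 0."""
    s_add, s_mul = row["is_add"], row["is_mul"]
    a, b, c = row["a"], row["b"], row["c"]
    return [
        s_add * (s_add - 1) % P,      # is_add is a bit
        s_mul * (s_mul - 1) % P,      # is_mul is a bit
        (s_add + s_mul - 1) % P,      # exactly one opcode is selected
        (s_add * (a + b - c) + s_mul * (a * b - c)) % P,  # result matches opcode
    ]


def trace_is_valid(trace):
    """A trace is accepted only if every constraint vanishes on every row."""
    return all(value == 0 for row in trace for value in row_constraints(row))


program = [("ADD", 3, 4), ("MUL", 5, 6)]
trace = execute(program)
print(trace_is_valid(trace))
```

Even in this toy, every additional opcode adds a selector column and another term to the last constraint, which is the sense in which a less regular instruction set can show up later as wider traces and more complex constraints.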

A general-purpose ISA offers obvious advantages: mature compilers, familiar programming models, and a lower barrier for proving ordinary programs. But those benefits come with baggage. These ISAs were not designed for algebraic efficiency. Their semantics can introduce irregularity that later appears as decoding overhead, trace inflation, or more complex constraints.

A more specialized ISA can make different tradeoffs. It may be easier to prove, more regular in execution, or better aligned with a particular proving architecture. But those gains can come at the cost of ecosystem maturity, software portability, and tooling depth.

This is why ISA discussions need to be treated seriously. They are not just about frontend ergonomics; they determine who the zkVM is for, which workloads it can support, and how much complexity it is willing to carry downstream.

If the ISA defines the language of computation, the VM layer determines how that computation is actually represented for proof generation.

This includes execution traces, memory organization, state transitions, and the decomposition of the system into components or tables. It is also the layer where many of the most important differences between zkVMs become visible.

Two systems can share a broadly similar ISA philosophy and still diverge sharply in how they structure execution. One may favor a highly modular architecture with separate components for CPU logic, memory, arithmetic operations, and specialized gadgets. Another may choose a more monolithic arrangement. One may optimize around lookup-heavy designs; another may prefer a different balance between trace regularity and circuit complexity. These choices shape witness-generation cost, prover parallelism, debuggability, and the practical difficulty of extending the VM over time.
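As a rough illustration of what a lookup-oriented, modular design means, the sketch below assumes a hypothetical CPU component that does not constrain XOR itself but delegates it to a precomputed table. A real lookup argument would prove the containment cryptographically; plain set membership stands in for it here, and the 4-bit width is chosen only to keep the table small.

```python
# Toy illustration only: set membership stands in for a real lookup argument.
BITS = 4  # operand width, kept tiny for this sketch

# Precomputed table holding every valid (a, b, a XOR b) triple.
XOR_TABLE = {(a, b, a ^ b) for a in range(1 << BITS) for b in range(1 << BITS)}


def lookups_hold(cpu_rows):
    """Check that each (a, b, result) triple claimed by the CPU is in the table."""
    return all(row in XOR_TABLE for row in cpu_rows)


honest_rows = [(3, 5, 6), (15, 1, 14)]
forged_rows = [(3, 5, 7)]
print(lookups_hold(honest_rows), lookups_hold(forged_rows))
```

The CPU component stays simple, but the table has to be committed to and the lookups have to be proven, so the cost moves into another component rather than disappearing.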

Memory is often the clearest example. In modern ZK systems, memory handling is rarely incidental. It is one of the places where costs accumulate fastest and where elegant-looking architectures can run into real friction. A zkVM that appears efficient on arithmetic-heavy kernels may degrade under realistic workloads if its memory model creates too much overhead. Conversely, a system that looks less impressive in synthetic benchmarks may behave better under sustained use because its trace layout and memory architecture are more resilient.
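One well-known style of memory argument checks consistency on a log of accesses sorted by address and time. The sketch below is a plain-Python stand-in under stated assumptions: the `Access` record, the zero-initialized memory, and the function name are hypothetical, and it is not a description of how any particular zkVM handles memory.

```python
from typing import NamedTuple


class Access(NamedTuple):
    time: int       # step at which the access happened
    addr: int       # memory address
    is_write: bool  # True for a store, False for a load
    value: int      # value written or value claimed to be read


def memory_is_consistent(log):
    """Each read must return the most recent value at its address.

    Assumes memory is zero-initialized, so a read with no earlier access
    to the same address must return 0.
    """
    ordered = sorted(log, key=lambda acc: (acc.addr, acc.time))
    prev = None
    for acc in ordered:
        same_cell = prev is not None and prev.addr == acc.addr
        if not acc.is_write:
            expected = prev.value if same_cell else 0
            if acc.value != expected:
                return False
        prev = acc
    return True


good_log = [Access(0, 8, True, 42), Access(1, 8, False, 42), Access(2, 16, False, 0)]
bad_log = [Access(0, 8, True, 42), Access(1, 8, False, 41)]
print(memory_is_consistent(good_log), memory_is_consistent(bad_log))
```

In a scheme of this shape, every load and store contributes a row to the access log, and the prover must additionally show that the sorted log is a reordering of the accesses made in execution order. That is one reason memory-heavy workloads can cost more than arithmetic-heavy kernels suggest.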

This is why the VM layer deserves more attention than it usually gets. It is the layer that translates abstract semantics into concrete proving burden.

The proving layer is where execution traces become algebraic constraints and final proofs. It is also the layer most likely to dominate public discourse, because it is where recognizable terms live: STARKs, SNARKs, recursion, lookup arguments, polynomial commitments.

These choices matter enormously. They affect prover time, proof size, verifier cost, setup assumptions, hardware profile, and recursion feasibility. A strong proving architecture can unlock very real gains.

But the market often overcorrects: it treats the proving layer as if it alone defines the zkVM.

It does not.

A powerful prover can be handicapped by a poor trace model. A clean VM architecture can be constrained by a backend that does not fit its tables well. A system can inherit attractive asymptotics while remaining awkward in practice because witness generation, memory movement, or recursive orchestration dominate the real cost. The layered view does not diminish the importance of the proving layer; it puts it into proportion.

This is also where public comparisons become easiest to misread. Prover performance depends on implementation maturity, hardware assumptions, batching strategy, field choice, recursion depth, and workload selection. Without context, benchmark numbers often function more as backend advertisements than as meaningful system comparisons.

The better question is not “Which proving system is fastest?”, but rather “Fastest for what trace structure, under what memory model, for which workload, with what recursion and verification constraints?”

These are the questions serious builders should be asking.
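One way to keep those questions attached to a reported number is to record the context next to it. The sketch below is purely illustrative: the `BenchmarkReport` type, its field names, and the `missing_context` helper are hypothetical, and the timing value is a placeholder rather than a measurement.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class BenchmarkReport:
    prover_seconds: float                     # the headline number
    workload: Optional[str] = None            # what was actually proven
    hardware: Optional[str] = None            # machine the prover ran on
    trace_structure: Optional[str] = None     # how execution was laid out
    memory_model: Optional[str] = None        # how memory was checked
    field_choice: Optional[str] = None        # field the proof system uses
    recursion_depth: Optional[int] = None     # levels of recursive proving
    batching_strategy: Optional[str] = None   # how proofs were batched


def missing_context(report):
    """List the context fields that were left unspecified."""
    return [f.name for f in fields(report) if getattr(report, f.name) is None]


bare = BenchmarkReport(prover_seconds=12.5)  # placeholder value, not a result
print(missing_context(bare))
```

A report that leaves most of these fields empty is the kind of number that is hard to compare across systems.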

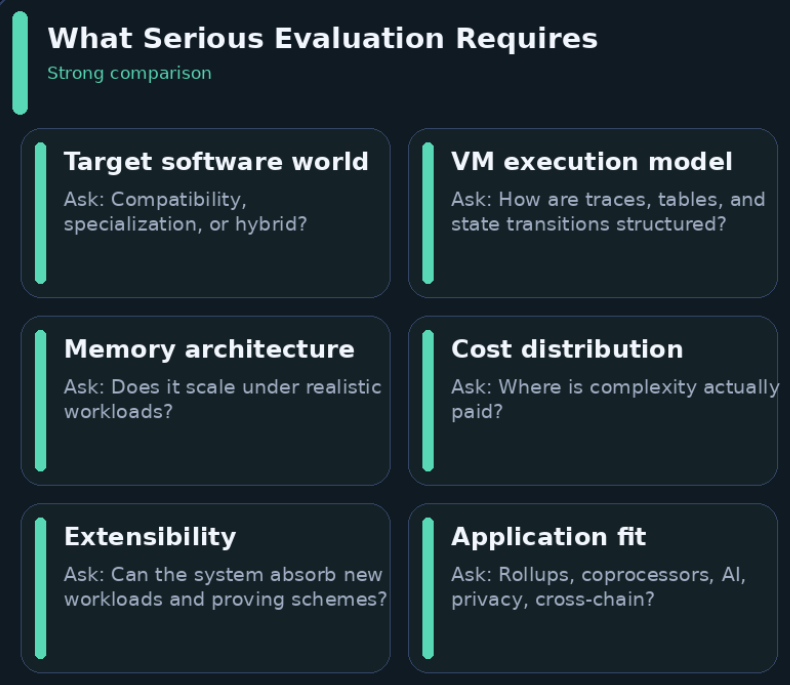

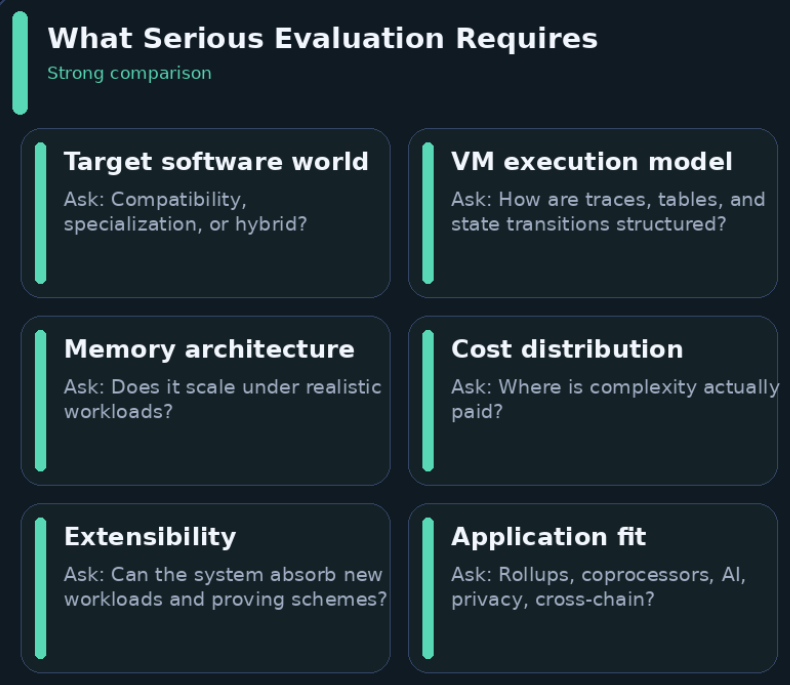

Once the layered framework is taken seriously, evaluation becomes more demanding, and much more useful.

At minimum, five questions matter:

There is no single best zkVM in the abstract. There are only systems whose layered choices fit certain constraints better than others.

The value of this framework is that it gives the industry a more honest standard.

A system like Ziren should not be judged by whether it “wins” zkVM benchmarks in the abstract. It should be judged by whether its choices across ISA, VM architecture, and proving design form a coherent stack for the environments it aims to serve.

That is a higher bar than isolated benchmark performance. It asks whether the execution model has been chosen with proving consequences in mind, whether the trace architecture and memory strategy reinforce that choice, whether the proving layer fits the execution model it serves, and whether the resulting developer surface is usable enough to matter in practice.

This is also a healthier way to discuss positioning. It avoids two equally weak habits: generic speed-posturing on one side, and self-congratulatory attempts to borrow authority from research taxonomies on the other. If a zkVM is strong, the strength should be visible in the coherence of its design.

That is the standard serious readers should demand.

The zkVM market currently rewards a style of communication that is easy to produce and difficult to trust: selective benchmark disclosure, inflated claims about universality, and vague declarations of “production readiness” without clear discussion of constraints.

That style is especially unhelpful in a field this heterogeneous. A benchmark without architectural explanation is only weakly informative. A performance claim without workload characterization is mostly marketing. A compatibility claim without details on toolchain maturity, unsupported behaviors, or memory tradeoffs says very little.

The field would benefit from rewarding a different style of communication: explicit tradeoff statements.

Tell readers why a specific ISA choice was made. Explain how the VM handles memory and trace composition. Be clear about which workloads the proving backend favors and which it does not. Say what the system is optimized for, and what it is not.

That kind of honesty would do more for zkVM adoption than another benchmark screenshot.
